As good engineers we always try to build systems that have enough capacity to service all our customers' requests, all the time. It's an admirable goal and very commercially sensible, but there is no such thing as unlimited capacity. Sooner or later we'll get our scalability wrong, suffer a partial failure, or find it isn't cost effective to scale up to those rare usage peaks - what will the user experience be then?

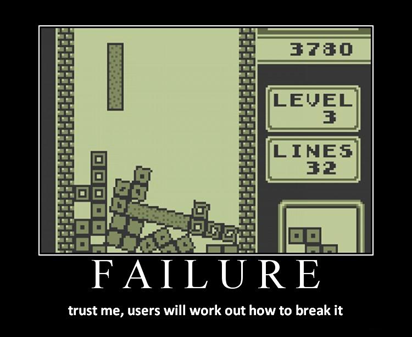

By default it will be things like "page cannot be displayed", "connection timed out" or our staple favorites 404 and 500 - basically the middle finger of the internet. You have to think about how your systems will behave under unexpected load and what you'd want your customers to see when it happens. There are a few things you can keep in mind when building your products that, if properly addressed, will lead to a very different user experience when things get tough:

Customise your error messages

Those unexpected peaks or partial failures are always going to happen sooner or later, so why not serve up a nicely formatted (but lightweight) page with alternative links or customer service contact details rather than a nasty, cryptic error message? It is so easy and cheap to do and makes such a huge difference to user perception that there really is no reason not to.
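As a rough illustration of how small such a page can be, here is a minimal sketch that builds a static, dependency-free error response. The function name `friendly_error`, the `/help` link and the `support@example.com` address are placeholders invented for this example, not part of any particular framework.

```python
from html import escape
from string import Template

# Deliberately tiny page: no images, scripts or database calls, so it can
# still be served when the rest of the stack is struggling.
PAGE = Template(
    "<!doctype html>\n"
    "<title>$title</title>\n"
    "<h1>$title</h1>\n"
    '<p>Try <a href="$alt_url">$alt_label</a> or contact $contact.</p>\n'
)

# Hypothetical wording; adjust to suit your product.
TITLES = {
    404: "We couldn't find that page",
    500: "Something went wrong on our side",
    503: "We're very busy right now",
}


def friendly_error(status, alt_url="/help", alt_label="our help page",
                   contact="support@example.com"):
    """Return (status, headers, body) for a lightweight error page."""
    title = TITLES.get(status, "Sorry, something went wrong")
    body = PAGE.substitute(
        title=escape(title),
        alt_url=escape(alt_url),
        alt_label=escape(alt_label),
        contact=escape(contact),
    ).encode("utf-8")
    headers = {
        "Content-Type": "text/html; charset=utf-8",
        "Content-Length": str(len(body)),
    }
    if status == 503:
        # Hint to clients (and crawlers) when it is worth trying again.
        headers["Retry-After"] = "30"
    return status, headers, body
```

Because the body is built from a fixed template, it could just as easily be rendered once at deploy time and served as a static file.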

Queue or throttle excess traffic

What's better than a nicer error message? Some kind of service - even if it doesn't match our ideals. Queuing is a nice solution: it protects your in-flight users just like denial would, and provides a better experience for those new arrivals who would otherwise push you over the edge and get an error.

A holding page, an alternative URL or maybe a nice countdown with an automatic refresh is all you need. These things can be a little more time consuming to build because your application needs to have some awareness of its environment if you expect it to make decisions about what to serve up based on remaining capacity. There are some very easy ways to buy this back in hardware if you're using load balancers like F5, NetScaler, or Redline - a little experience will tell you how many users/connections/Mbps each node can tolerate, and you can configure an alternative page or redirect for anything above that threshold. Depending on what you have installed this can even be served directly from the device cache, making it even lower impact for your over-busy system. Queuing is just like its real-life namesake: "line up here and wait for access to the system".
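If you do build the queue into the application rather than the load balancer, the core logic can be quite small. The sketch below is one possible shape, assuming a single process and a known per-node user limit; the class name `AdmissionGate` and its methods are made up for illustration.

```python
from collections import deque


class AdmissionGate:
    """Serve up to max_active users; everyone else waits in arrival order."""

    def __init__(self, max_active):
        self.max_active = max_active
        self.active = set()      # users currently being served
        self.waiting = deque()   # users shown the holding page, oldest first

    def arrive(self, user_id):
        """Return ("serve", 0) or ("hold", position_in_queue)."""
        if user_id in self.active:
            return ("serve", 0)  # in-flight users are never pushed out
        if user_id not in self.waiting:
            if len(self.active) < self.max_active:
                self.active.add(user_id)
                return ("serve", 0)
            self.waiting.append(user_id)
        # Position can drive a countdown or "you are number N" message.
        return ("hold", self.waiting.index(user_id) + 1)

    def leave(self, user_id):
        """Free a slot and promote the longest-waiting users into it."""
        self.active.discard(user_id)
        while self.waiting and len(self.active) < self.max_active:
            self.active.add(self.waiting.popleft())
```

A holding page with an automatic refresh would simply call `arrive` again on each reload until the answer changes from `"hold"` to `"serve"`.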

Throttling might suit certain applications better - particularly APIs with a heavy non-human user population. Client applications might not understand queuing messages, holding pages or redirects returned from the oversubscribed feature, but they'll be less sensitive to a general slowdown. Throttling, as I use it here, is about reducing the maximum share of total system capacity any individual can consume in the hope that this will allow more total individuals to use the system concurrently. The theory is that once we hit a certain threshold, if we flatten out everyone's usage, the big consumers will be held back a little (but still served) and the rest of us can still have a turn too. Like most ideas here, this can be implemented in a variety of places in your stack: queries/sec or concurrent data sets searchable in the database, concurrent logons in the middle tier, TPS in the application, GETs/POSTs on the front end or even number of concurrent connections per unique client IP address at the network edge.
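One way to picture the "flatten out everyone's usage" idea is as a max-min fair share calculation: small consumers get everything they ask for, and the biggest consumers are capped at whatever is left, split evenly. This is only a sketch of that one interpretation; the function name `fair_share` and the units (say, requests per second) are assumptions for the example.

```python
def fair_share(demand, capacity):
    """Split capacity across clients so that heavy users are capped first.

    demand maps client id -> requested rate. Below the capacity threshold
    nobody is throttled; above it, the largest consumers are flattened.
    """
    if sum(demand.values()) <= capacity:
        return dict(demand)  # under the threshold: no throttling at all

    allocation = {}
    remaining = capacity
    # Work from the smallest request upwards.
    pending = sorted(demand.items(), key=lambda item: item[1])
    while pending:
        share = remaining / len(pending)  # equal split of what is left
        client, want = pending[0]
        if want <= share:
            # Small consumer: give them all they asked for.
            allocation[client] = want
            remaining -= want
            pending.pop(0)
        else:
            # Everyone still pending wants more than an equal share,
            # so they are all capped at that share.
            for client, _ in pending:
                allocation[client] = share
            break
    return allocation
```

For example, with a capacity of 90 and demands of 10, 20 and 100, the two small clients are untouched and the large one is held to 60: slowed down, but still served.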

Where is the right place to impose planned limits? I'd suggest multipoint coverage, but to start with think about your most critical constraint (what dies first as usage climbs?) and then do it at the layer above that one!

Fail gracefully

This is one of the toughest things to do with a distributed system; your features need to be aware of their environment both locally and on remote instances and they need a way to bring up/take down/recycle themselves and each other. If your system suffers a partial failure, or load is climbing towards the point marked "instability", then you need to make a decision. Do you bring up or repurpose more nodes to handle the same feature? Redirect a percentage of requests to another cluster/DC or trigger any of the other techniques we talked about above? Great if you can, but if you can't, it might be time to use those [refactored to be friendly] error messages.

Turning away users by conscious decision may rub us up the wrong way at first glance, but depending on how your system behaves under excessive load it might actually be for the best. If you are at maximum capacity and serving all currently connected users, would new user connections result in degradation of service to those already in the system? Maybe saying no to additional connections might be frustrating for those users being turned away, but you have to weigh that against kicking off users midway through transactions, or worse still, crashing the entire system!

Graceful failure is essentially the art of predicting the immediate future of your system and handling what are likely to be excess users/transactions/connections in a premeditated fashion. First you need to know what your thresholds are; then you need very fine resolution instrumentation to tell you how close you are to them on a second-by-second basis, and finally, you need an automated way to respond to impending trouble - because humans are too slow to prevent poor customer service in busy systems; we're better at cleaning up once the trouble has been and gone.
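Those three ingredients (a known threshold, fine-grained samples, and an automated response) can be sketched in a few lines. The example below uses a simple straight-line projection over recent samples, which is an assumption made here for brevity rather than a recommendation; the class name `LoadGuard` and the action names are also invented.

```python
from collections import deque


class LoadGuard:
    """Decide how to treat new arrivals from recent load samples."""

    def __init__(self, limit, horizon_s=5, window=10):
        self.limit = limit          # the threshold you measured beforehand
        self.horizon_s = horizon_s  # how far ahead to look, in seconds
        self.samples = deque(maxlen=window)  # (timestamp_s, value) pairs

    def record(self, timestamp_s, value):
        self.samples.append((timestamp_s, value))

    def projected(self):
        """Linear extrapolation of load horizon_s seconds from now."""
        if not self.samples:
            return 0.0
        (t0, v0), (t1, v1) = self.samples[0], self.samples[-1]
        slope = (v1 - v0) / (t1 - t0) if t1 > t0 else 0.0
        return v1 + slope * self.horizon_s

    def action(self):
        if not self.samples:
            return "serve"
        if self.samples[-1][1] >= self.limit:
            return "deny_new"   # already at the limit: protect in-flight users
        if self.projected() >= self.limit:
            return "hold_new"   # trouble is coming: queue or throttle arrivals
        return "serve"
```

In practice `record` would be fed once a second from whatever instrumentation you already have, and `action` would be consulted for each new connection.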

For example, let's say you're aware of a memory utilization threshold or a maximum number of users per web node - wouldn't it be better to actively restart a service or show a holding page before you lose control of the stack? If you haven't managed to build in some kind of queuing or throttling then you might be showing an error or denying service, but isn't that still better than losing the whole system?

The last part of graceful failure somewhat overlaps with recovery-oriented computing: make sure that, upon death, the last thing you ever do is take a snapshot of what you were doing and what the environment was like. If your processes do this, then your watchdog processes (or monitoring systems) can know whether or not it's safe to restart that failed instance (or take an alternative action based on the data). You'll also have an easier time diagnosing faults, and the data generated will help you keep a rolling benchmark of the thresholds in a system with a high rate of change.
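A minimal sketch of the snapshot-then-watchdog idea follows. It only covers failures that surface as ordinary exceptions; a hard kill (for example by the operating system's out-of-memory handling) gives the process no chance to write anything. The names `snapshot_on_failure` and `safe_to_restart`, the `memory_pct` field and the 90% figure are all assumptions for illustration.

```python
import json
import os
import time
import traceback
from contextlib import contextmanager


@contextmanager
def snapshot_on_failure(path, get_state):
    """Run a block of work; on an unhandled exception, record a snapshot."""
    try:
        yield
    except Exception as exc:
        snapshot = {
            "time": time.time(),
            "pid": os.getpid(),
            "error": repr(exc),
            "traceback": traceback.format_exc(),
            "state": get_state(),  # what we were doing, and the environment
        }
        tmp = f"{path}.tmp"
        with open(tmp, "w", encoding="utf-8") as fh:
            json.dump(snapshot, fh, default=str)
        os.replace(tmp, path)  # atomic rename: never a half-written snapshot
        raise  # still die; the snapshot is the last thing we do


def safe_to_restart(path, max_memory_pct=90):
    """Watchdog-side check: was the node already starved when it died?"""
    with open(path, encoding="utf-8") as fh:
        snapshot = json.load(fh)
    return snapshot["state"].get("memory_pct", 0) < max_memory_pct
```

A worker would wrap its main loop in `with snapshot_on_failure(...)`, passing a callable that returns whatever counters it tracks, and the watchdog would read the file before deciding whether to restart the instance or route around it.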